Abstract:

Large-scale acquisition of exterior urban environments is by now a well-established technology, supporting many applications in search, navigation, and commerce. The same is, however, not the case for indoor environments, where access is often restricted and the spaces are cluttered. Further, such environments typically contain a high density of repeated objects (e.g., tables, chairs, monitors) in regular or non-regular arrangements with significant pose variations and articulations. In this paper, we exploit the special structure of indoor environments to accelerate their 3D acquisition and recognition with a low-end handheld scanner. Our approach runs in two phases: (i) a learning phase, in which we acquire 3D models of frequently occurring objects and capture their variability modes from only a few scans, and (ii) a recognition phase, in which, from a single scan of a new area, we identify previously seen objects in different poses and locations at an average recognition time of 200 ms per model. We evaluate the robustness and limits of the proposed recognition system on a range of synthetic and real-world scans under challenging settings.
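
The two phases can be illustrated with a minimal sketch of how learned variability modes might be stored and queried. It assumes that each mode is a single scalar parameter (an angle or a height) whose admissible range is simply the span of values observed in the few training scans. All names here (`VariabilityMode`, `LearnedModel`, `back_angle_deg`, `seat_height_cm`) are hypothetical, and this is not the authors' representation, which this page does not describe.

```python
from dataclasses import dataclass, field


@dataclass
class VariabilityMode:
    """Hypothetical 1-DOF mode, e.g. a chair-back angle or a seat height."""
    name: str
    low: float = float("inf")
    high: float = float("-inf")

    def observe(self, value):
        # Learning phase (assumed): widen the range to cover every observed value.
        self.low = min(self.low, value)
        self.high = max(self.high, value)

    def admits(self, value, tolerance=0.0):
        # Recognition phase (assumed): accept values inside the learned range, with slack.
        return self.low - tolerance <= value <= self.high + tolerance


@dataclass
class LearnedModel:
    """Hypothetical learned object: a name plus its scalar variability modes."""
    name: str
    modes: dict = field(default_factory=dict)

    def learn(self, observations):
        """observations: one {mode_name: value} dict per training scan."""
        for obs in observations:
            for mode_name, value in obs.items():
                self.modes.setdefault(mode_name, VariabilityMode(mode_name)).observe(value)

    def matches(self, candidate, tolerance=0.0):
        """True if every inferred mode value is known and lies within its learned range."""
        return all(
            mode_name in self.modes and self.modes[mode_name].admits(value, tolerance)
            for mode_name, value in candidate.items()
        )


if __name__ == "__main__":
    chair = LearnedModel("chair")
    # Two training scans of the same chair in different configurations.
    chair.learn([
        {"back_angle_deg": 95.0, "seat_height_cm": 44.0},
        {"back_angle_deg": 110.0, "seat_height_cm": 50.0},
    ])
    print(chair.matches({"back_angle_deg": 100.0, "seat_height_cm": 47.0}))
    print(chair.matches({"back_angle_deg": 150.0}))
```

Representing each mode as a plain interval keeps the check cheap, which fits a setting where many candidate objects must be tested per scan; a real system would also have to estimate the parameter values from the scan, which this sketch takes as given.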

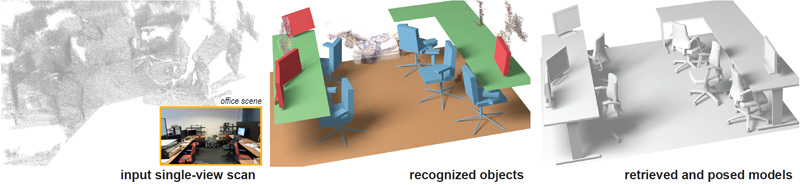

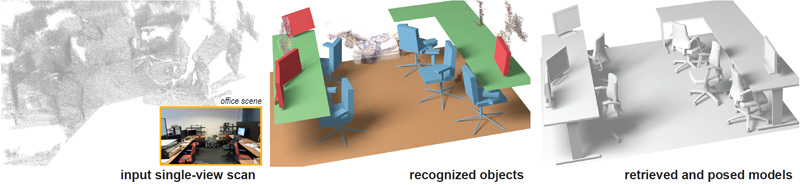

Recognition results on various office and auditorium scenes. Because the single-view input scans are too poor in quality to convey the scene complexity, we include scene images purely for visualization (these were not available to the algorithm). Note that in the auditorium examples we even detect the tables attached to the chairs; this is possible because we extracted this variation mode in the learning phase.


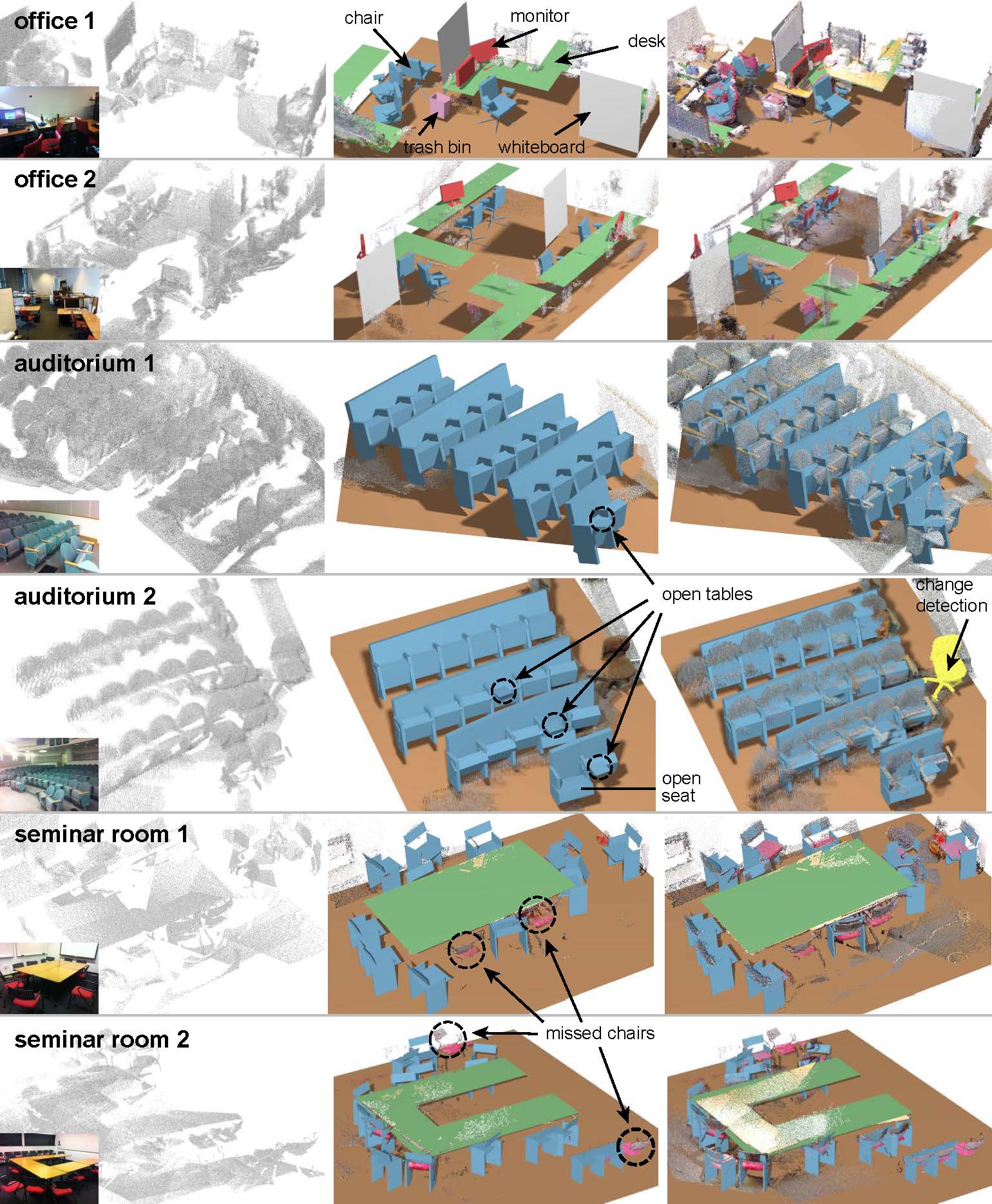

Recognition results on synthetic scans of virtual scenes: (left to right) synthetic scenes, virtual scans, and detected scene objects with variations. Unmatched points are shown in gray.


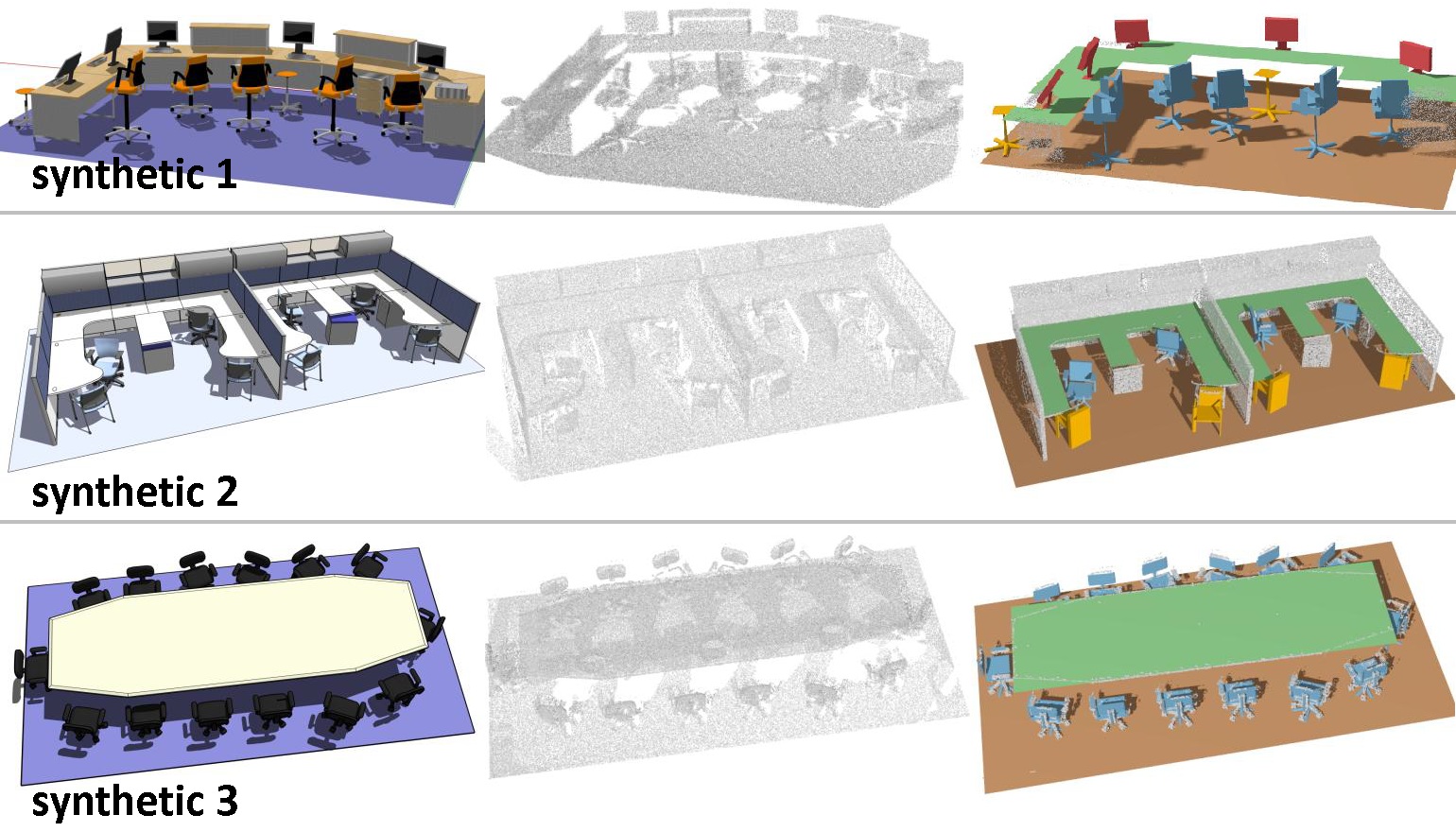

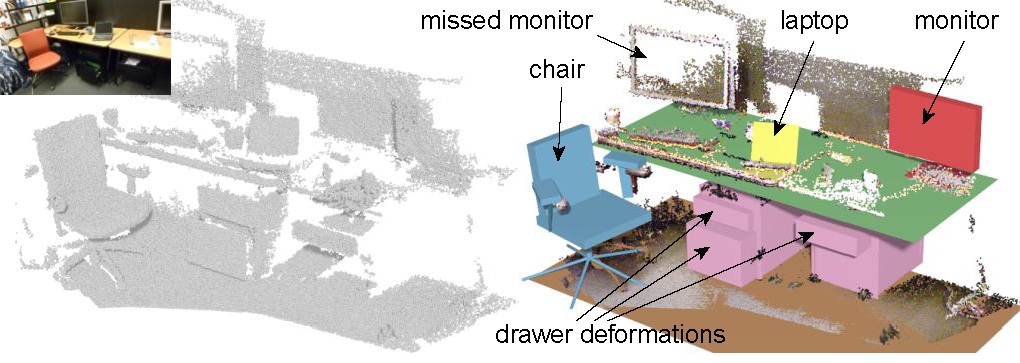

A close-up office scene. All of the recognized objects have one or more deformation modes. The algorithm inferred the angles of the laptop screen and the chair back, and the heights of the chair seat, the armrests, and the monitor. We can also capture the deformation modes of open drawers.
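
To make "inferring the angle of the laptop screen" concrete, here is a minimal sketch of recovering a single hinge angle from scan points by brute-force 1D search. It assumes a simplified 2D cross-section in which the screen is a segment of known length hinged at the origin, and it scores each candidate angle by the sum of squared point-to-segment distances. The functions `point_to_lid_distance` and `fit_hinge_angle` are hypothetical illustrations, not the paper's recognition algorithm.

```python
import math


def point_to_lid_distance(px, py, theta, lid_length):
    """Distance from a 2D point to a lid segment hinged at the origin.

    The lid is modelled (hypothetically) as the segment from (0, 0) to
    lid_length * (cos theta, sin theta), with theta in radians.
    """
    dx, dy = math.cos(theta), math.sin(theta)
    # Project the point onto the lid direction and clamp to the segment.
    t = max(0.0, min(lid_length, px * dx + py * dy))
    return math.hypot(px - t * dx, py - t * dy)


def fit_hinge_angle(points, lid_length, angle_range_deg, step_deg=0.5):
    """Return the opening angle (degrees) that best explains the points.

    Searches the closed range angle_range_deg in steps of step_deg and keeps
    the angle with the lowest sum of squared point-to-lid distances.
    """
    lo, hi = angle_range_deg
    best_angle, best_cost = lo, float("inf")
    n_steps = int(round((hi - lo) / step_deg))
    for i in range(n_steps + 1):
        angle = lo + i * step_deg
        theta = math.radians(angle)
        cost = sum(point_to_lid_distance(x, y, theta, lid_length) ** 2
                   for x, y in points)
        if cost < best_cost:
            best_angle, best_cost = angle, cost
    return best_angle


if __name__ == "__main__":
    # Synthetic "scan" of a 30 cm screen opened to 110 degrees.
    true_theta = math.radians(110.0)
    scan = [(s * math.cos(true_theta), s * math.sin(true_theta))
            for s in (0.05, 0.10, 0.15, 0.20, 0.25, 0.30)]
    print(fit_hinge_angle(scan, lid_length=0.30, angle_range_deg=(60.0, 140.0)))
```

Restricting the search to a fixed range stands in for the idea that a learned variability mode bounds which configurations are plausible; the exhaustive search is chosen only for clarity, and a practical system would use a faster optimizer and full 3D geometry.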


Data: data_learning and data_recognition.

Acknowledgements:

Bibtex:

@article{kmyg_acquireIndoor_sigga12,
  author    = {Young Min Kim and Niloy J. Mitra and Dong-Ming Yan and Leonidas Guibas},
  title     = {Acquiring {3D} Indoor Environments with Variability and Repetition},
  journal   = {ACM Transactions on Graphics},
  volume    = {31},
  number    = {6},
  pages     = {138:1--138:11},
  articleno = {138},
  numpages  = {11},
  year      = {2012},
}
